AI in 2026: Beyond Chatbots to Latent Reasoning and Curious Agents

The "chat" window is just the interface now—the real magic is happening under the hood in the latent spaces and autonomous labs.

If 2024 was about talking and 2025 was about "thinking," 2026 is the year of Latent Reasoning and Autonomous Discovery. We aren't just building faster bots anymore; we're building entities that can navigate abstract concepts and explore the unknown.

Here’s the breakdown of what’s hitting the labs this month.

1. The DeepSeek-R1 "Mega-Update" (86-Page Blueprint)

The DeepSeek-R1 paper just got a massive update—it ballooned from 22 to 86 pages of pure technical depth. It’s the talk of the town because it provides the most transparent look yet at how open-source models can finally rival (and sometimes beat) "black-box" proprietary models in reasoning and safety. It’s a huge win for the community-driven AI movement.

2. ByteDance’s "Latent Reasoning" Breakthrough

The Seed team at ByteDance just dropped a paper (arXiv:2512.24617) introducing Dynamic Large Concept Models.

- The Big Idea: Instead of just predicting one token at a time, these models use "latent generative spaces" (similar to how high-end video generators like Sora work) to manipulate abstract ideas before they even start typing.

- The Result: Much deeper logic and better "world models" that don't get tripped up on complex, multi-step problems.
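To make "reasoning over concepts instead of words" a bit more concrete, here is a toy sketch. It is not the architecture from the ByteDance paper. It only illustrates the general idea of compressing a token sequence into fewer latent "concept" vectors that a model could then operate on. The similarity threshold, the mean pooling, and all function names are assumptions made for illustration.

```python
from typing import List

Vector = List[float]


def cosine(a: Vector, b: Vector) -> float:
    """Cosine similarity between two vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = sum(x * x for x in a) ** 0.5
    norm_b = sum(y * y for y in b) ** 0.5
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def segment_into_concepts(tokens: List[Vector], threshold: float = 0.5) -> List[List[Vector]]:
    """Start a new segment whenever two adjacent token vectors are dissimilar.

    The fixed threshold is a stand-in (assumption) for a learned boundary detector.
    """
    if not tokens:
        return []
    segments = [[tokens[0]]]
    for prev, cur in zip(tokens, tokens[1:]):
        if cosine(prev, cur) < threshold:
            segments.append([cur])       # meaning shifted: open a new concept
        else:
            segments[-1].append(cur)     # same idea: extend the current concept
    return segments


def pool(segment: List[Vector]) -> Vector:
    """Mean-pool one segment into a single latent concept vector."""
    n = len(segment)
    return [sum(v[i] for v in segment) / n for i in range(len(segment[0]))]


def to_concepts(tokens: List[Vector]) -> List[Vector]:
    """Compress a token sequence into a shorter sequence of concept vectors."""
    return [pool(s) for s in segment_into_concepts(tokens)]


# Five made-up 2-D "token embeddings" that fall into two clusters of meaning.
tokens = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0], [0.1, 0.9], [0.0, 1.0]]
concepts = to_concepts(tokens)
print(len(tokens), "tokens ->", len(concepts), "concepts")
```

In this toy, any later "thinking" step would run over two vectors instead of five, which is the intuition behind doing the heavy lifting in a compressed latent space.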

3. AI for Science: The "Generally Curious" Agent

Purdue University just launched a major initiative that's making waves this January. They are building Generally Curious Agents—AI units that don't just follow instructions but are programmed to want to learn. They autonomously formulate hypotheses, design scientific experiments, and iterate on data without needing a human to give them every step. We're talking about AI as a literal scientist, not just a lab assistant.
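The hypothesize, experiment, iterate loop can be sketched in a few lines. This is a minimal illustration of curiosity-driven exploration in general, not Purdue's system. The nearest-neighbour "hypothesis", the novelty score, and every name below are assumptions chosen to keep the example small.

```python
from typing import Callable, Dict, List, Tuple


def nearest(observed: Dict[int, float], x: int) -> int:
    """Return the already-tested input closest to x."""
    return min(observed, key=lambda seen: abs(seen - x))


def run_curious_agent(
    experiment: Callable[[int], float],
    candidates: List[int],
    steps: int,
) -> Tuple[Dict[int, float], List[Tuple[int, float]]]:
    """Repeatedly run the experiment the agent knows least about.

    Hypothesis (assumed): an untested input behaves like its nearest tested neighbour.
    Curiosity (assumed): the distance to that neighbour, i.e. how novel the input is.
    """
    observed: Dict[int, float] = {}          # input -> measured outcome
    log: List[Tuple[int, float]] = []        # (input, prediction error)
    for _ in range(steps):
        untested = [c for c in candidates if c not in observed]
        if not untested:
            break
        if observed:
            # Pick the most novel candidate and predict its outcome first.
            x = max(untested, key=lambda c: abs(nearest(observed, c) - c))
            predicted = observed[nearest(observed, x)]
        else:
            x, predicted = untested[0], 0.0  # nothing known yet: start anywhere
        outcome = experiment(x)              # run the "experiment"
        log.append((x, abs(outcome - predicted)))
        observed[x] = outcome                # update what the agent knows
    return observed, log


# A hidden "law of nature" the agent has to discover by probing it.
observed, log = run_curious_agent(lambda x: x * x, list(range(9)), steps=3)
print(list(observed))
```

Nobody tells the agent which input to try next. It keeps choosing whichever experiment sits farthest from anything it has already measured, and the logged prediction errors show how wrong its current hypothesis was each time.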

4. The Quantum-AI Convergence

IBM and other heavy hitters are officially moving AI into the Quantum-Ready era. We’re seeing models being co-trained with quantum simulators. The promise is large speed-ups on problems like chemistry simulation and cryptography, turning AI into a catalyst for the first real-world quantum computing applications.

5. Adversarial Multi-Agent Systems (MARL)

On the security front, we’re seeing a new wave of Multi-Agent Reinforcement Learning (MARL) frameworks. Researchers just demonstrated that AI can now autonomously find and exploit systemic weaknesses in other AI systems. It’s a bit of a "digital arms race," forcing us to rethink AI safety from the ground up as these systems start interacting in the wild.
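Here is a deliberately tiny sketch of what "one AI probing another for weaknesses" can look like. It is not any published framework: a single attacker learns against a fixed, hypothetical defender using a simple bandit-style value update with optimistic starting values, whereas real MARL setups have both sides learning. The attack categories and the defender's blind spot are invented for the example.

```python
from typing import Dict, List, Tuple


def defender_blocks(attack_kind: str) -> bool:
    """Hypothetical defender that blocks everything except one overlooked category."""
    return attack_kind != "encoded"


def train_attacker(kinds: List[str], episodes: int = 20) -> Tuple[Dict[str, float], Dict[str, int]]:
    """Greedy attacker with optimistic initial values.

    Every attack starts with an estimated value of 1.0, so the attacker
    tries each one at least once before settling on what actually works.
    """
    value = {k: 1.0 for k in kinds}   # estimated success rate per attack kind
    count = {k: 0 for k in kinds}     # how often each kind was tried
    for _ in range(episodes):
        kind = max(kinds, key=lambda k: value[k])      # pick the most promising attack
        reward = 0.0 if defender_blocks(kind) else 1.0  # 1.0 means the attack got through
        count[kind] += 1
        value[kind] += (reward - value[kind]) / count[kind]  # running-average update
    return value, count


kinds = ["plain", "roleplay", "multilingual", "encoded"]
value, count = train_attacker(kinds)
best = max(value, key=value.get)
print("weakness found:", best, "after", sum(count.values()), "probes")
```

The attacker was never told where the gap is. It discovers the blind spot from reward alone, which is the dynamic that makes these systems a safety concern once they interact in the wild.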

The Bottom Line for 2026

We've moved into a world where AI:

- Explores on its own (Curious Agents)

- Thinks in abstractions (Latent Reasoning)

- Powers the Quantum revolution

What do you think? Are we ready for agents that are more curious than we are?

Related Posts

AI Knowledge Base: How to Chat With Your Documents

Every team has knowledge scattered across documents, meeting notes, transcripts, and files, but finding the right answer at the right moment is still harder than it should be. An AI knowledge base helps teams chat with their documents, retrieve grounded answers, and turn existing company knowledge into faster, more reliable work.

ChatGPT 5.5 Just Changed AI Workflow Automation Forever

OpenAI released GPT-5.5 on April 23, 2026. The headline is bigger benchmark scores, but the real story is more practical: AI is moving from chat responses to real work execution. For teams building with AI agents, multi-model AI, live contexts, and workflow automation, this is an important shift.

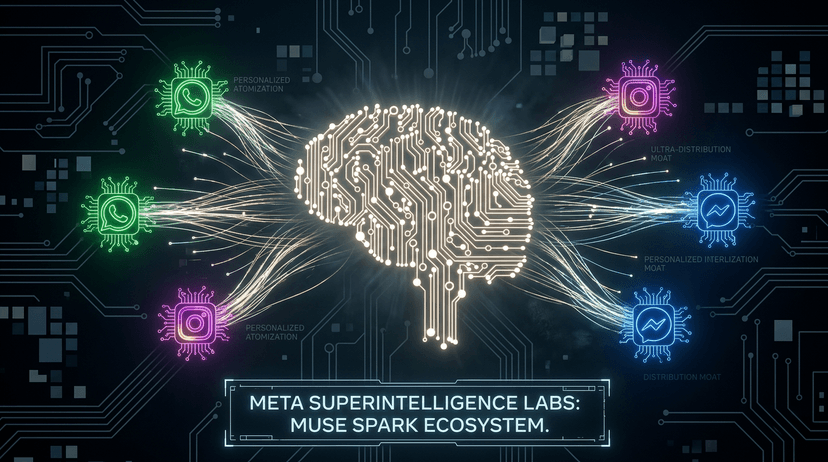

Meta Muse Spark Brings Personal AI Into WhatsApp, Instagram, and Messenger. Here's What That Changes

Meta just launched Muse Spark, its most powerful AI model yet, and its first built under the newly formed Meta Superintelligence Labs. The announcement is bigger than a benchmark release. It signals a fundamental shift in Meta's AI strategy, a new chapter in the frontier model race, and a direct challenge to how the rest of the industry thinks about personal AI. Here is what it means and why it matters to anyone building serious workflows with AI today.