Meta Muse Spark Brings Personal AI Into WhatsApp, Instagram, and Messenger. Here's What That Changes

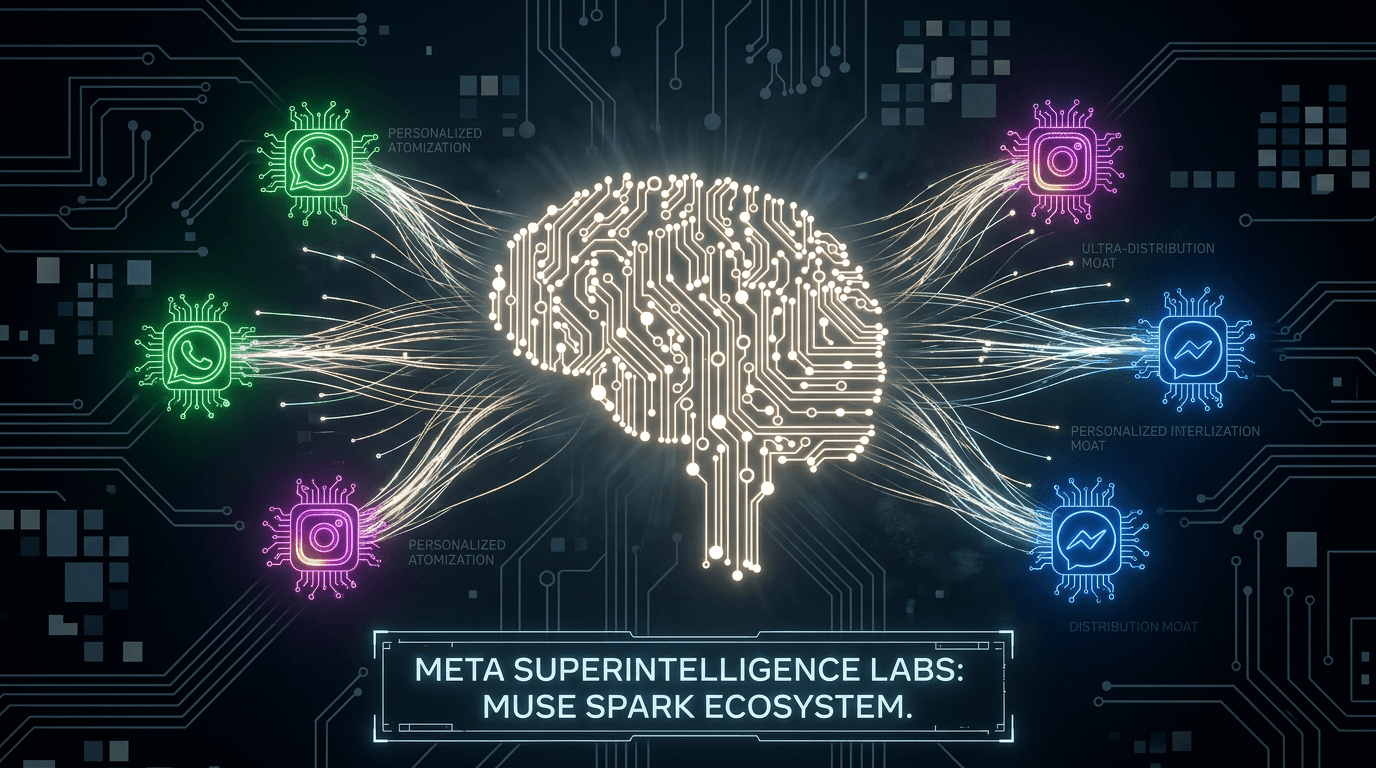

Meta just launched Muse Spark, its most powerful AI model yet, and its first built under the newly formed Meta Superintelligence Labs. The announcement is bigger than a benchmark release. It signals a fundamental shift in Meta's AI strategy, a new chapter in the frontier model race, and a direct challenge to how the rest of the industry thinks about personal AI. Here is what it means and why it matters to anyone building serious workflows with AI today.

Muse Spark comes out of Meta Superintelligence Labs (MSL), the company's new dedicated research division, and it marks a significant departure from how Meta has approached AI for the past several years.

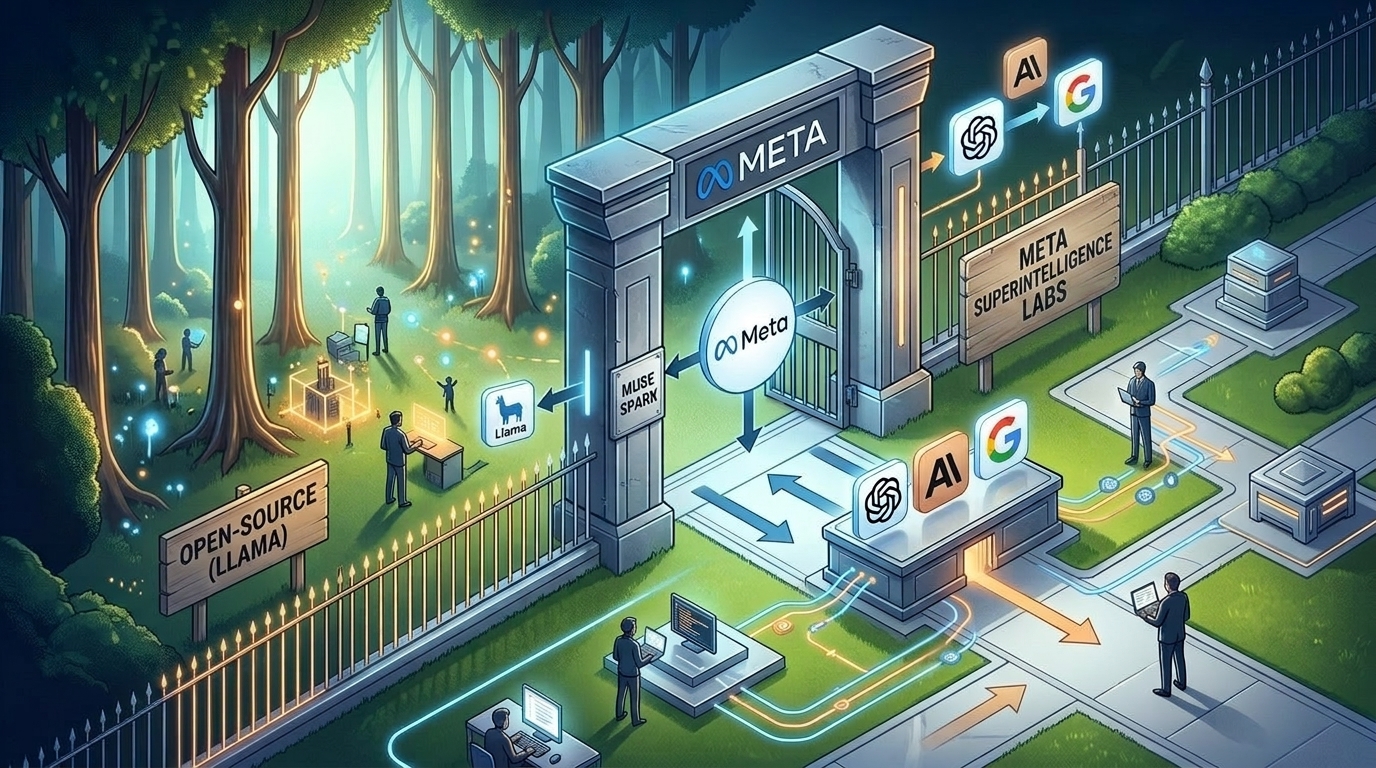

This is not another Llama release. It is not open-source. And it is not positioned as a developer tool.

Muse Spark is Meta's first direct answer to GPT-5 and Gemini: a closed, consumer-facing frontier model designed to be personal, capable, and deeply integrated into Meta's 3.5 billion-user platform ecosystem.

The implications are significant for the market and for every team that is trying to build smarter AI workflows right now.

What Meta Actually Launched

Muse Spark is the flagship model from Meta Superintelligence Labs, an organization that Meta built out over the past year with aggressive recruiting from across the industry. The lab is led by Alexandr Wang, the founder of Scale AI, who joined Meta in a high-profile move that signaled the company was serious about building toward a new category of AI capability.

The model itself is described as Meta's most capable to date, with early benchmark results placing it fourth among frontier models globally. That puts it in the same tier as the best models from OpenAI, Google, and Anthropic, a significant jump from where Meta's previous public models stood.

Key things to know about Muse Spark:

- Closed model: unlike the Llama series, Muse Spark is not open-source

- Framed around "personal superintelligence": Meta's positioning is about a model that understands you personally, not just one that answers questions

- Integrated into Meta AI: available across WhatsApp, Instagram, Messenger, and the standalone Meta AI app

- Built by MSL: a new internal lab that operates with more autonomy and a more aggressive research mandate than Meta's previous AI teams

This is Meta's reset moment for AI.


Why the "Personal Superintelligence" Framing Matters

Most frontier model launches are framed around benchmarks: coding scores, math scores, reasoning benchmarks. Meta took a different angle with Muse Spark.

The company framed the launch around personal superintelligence, the idea that AI should become more useful the more it knows about you, your context, your preferences, and your goals.

That is a meaningful product and positioning bet.

It suggests Meta is not trying to win by having the fastest model or the best math scores. It is trying to win by having the AI that feels most useful to the most people, integrated into the platforms where those people already spend their time.

With 3.5 billion users across its apps, Meta has a distribution advantage that no other AI lab can match. The question is whether Muse Spark's model quality is strong enough to capitalize on that reach.

Early independent reviews suggest the model is competitive, though Gemini 3.1 Pro still holds the top spot on several benchmarks. But benchmarks are only part of the story.

The Open-Source Pivot Is the Real Story

For years, Meta's AI strategy was defined by openness. The Llama model family became one of the most important open-source AI releases in the industry, spawning thousands of fine-tunes, products, and research projects. Meta used open-source as both a competitive strategy and a philosophical position.

Muse Spark abandons that position entirely.

This is not a minor update to the Llama line. This is a closed, proprietary frontier model that Meta is keeping to itself. The reasons are not hard to guess: frontier-level model training is expensive, the competitive stakes are higher, and a closed model gives Meta much more control over the product experience it can build on top.

But the implications for the broader ecosystem are significant.

The AI community that grew up building on Llama is now watching Meta shift its most capable model into a closed garden. Developers who relied on open Llama weights for custom applications will not have the same access to Muse Spark's architecture. And the companies that positioned themselves around open-source AI will need to reconsider what Meta's long-term product roadmap actually looks like.

This is a pivotal shift. It tells us that when the stakes are high enough, even the most committed open-source players in AI will change course.

The Llama model family built one of the most active developer communities in AI. Muse Spark signals that Meta is now prioritizing control over openness.

What the Rankings Actually Tell Us

Early leaderboard results are important context for understanding Muse Spark's position.

Fourth place among frontier models is a meaningful debut. It means Muse Spark is genuinely competitive with the best models from the most well-resourced AI labs in the world. But it also means there is a gap.

According to independent reviews, Gemini 3.1 Pro currently leads the frontier model pack, with GPT-5 and Claude Opus 4 also placing ahead of Muse Spark on certain evaluations. That does not make Muse Spark a failure: a model in the top four globally is extraordinary by any standard. But it does mean Meta has work to do if it wants Muse Spark to become the default personal AI for its billions of users.

The interesting competitive dynamic here is that Meta is not trying to win on model quality alone. It is trying to win on the combination of model quality plus distribution plus personalization. Even a slightly lower benchmark score is less important when you can reach users through their daily WhatsApp messages and Instagram DMs.

That is a different kind of frontier competition, and it is one that could reshape who wins the AI platform race.

How This Changes the Frontier Model Landscape

The Muse Spark launch reshapes the competitive map in a few important ways.

The "big five" are now fully formed. OpenAI, Google, Anthropic, xAI, and Meta are all now competing directly at the frontier level with closed, consumer-facing models. The age of one or two dominant labs is over. This is now a five-way race with different distribution strategies, product approaches, and target users.

Distribution is becoming a moat. Meta's 3.5 billion users represent a unique advantage that no other AI lab has. Even if Muse Spark is not the best model on benchmarks, embedding it deeply into WhatsApp and Instagram gives it more daily interactions than any standalone AI product could ever achieve.

The open-source AI community faces a new question. With Meta's flagship research now going into a closed model, the open-source AI ecosystem loses one of its most important contributors to the frontier tier. Llama will likely continue, but the direction is clear: the very best of what Meta builds will no longer be freely available.

Model choice matters more than ever. As the frontier becomes more crowded with capable, specialized models, teams need flexibility. Locking into a single provider (even a strong one) creates risk and limits what is possible.

What This Means for Teams Building with AI

The Muse Spark launch is a reminder of something that matters deeply for anyone building serious workflows with AI today: the model landscape is changing faster than most organizations can track.

Six months ago, the frontier looked different. Six months from now, it will look different again. New labs are shipping. Rankings are shifting. Capabilities are expanding in unexpected directions.

That pace of change creates a real operational challenge.

If your team has built all of its AI workflows around a single provider, you are now exposed every time the rankings shift, every time a new model launches, and every time the provider changes pricing, availability, or terms.

The smarter approach is to build AI workflows that are model-flexible: systems where you can swap in the best model for each task without rebuilding everything from scratch.

That is exactly what separates a one-time AI experiment from a durable AI capability.
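To make "model-flexible" concrete, here is a minimal sketch of the idea in Python. Everything in it is an assumption made for illustration: `ModelRouter`, the stub models, and the task name `summarize` are hypothetical and do not correspond to any provider's SDK or to Springbase's product. The point is only that workflow code asks for a task, and a single routing entry decides which model serves it.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict

# Hypothetical type: any callable that takes a prompt and returns text.
ModelFn = Callable[[str], str]


@dataclass
class ModelRouter:
    """Maps task names to models so workflow code never names a provider."""
    models: Dict[str, ModelFn] = field(default_factory=dict)
    routes: Dict[str, str] = field(default_factory=dict)

    def register(self, name: str, fn: ModelFn) -> None:
        # Make a model available under a short name.
        self.models[name] = fn

    def route(self, task: str, model_name: str) -> None:
        # Point a task at a registered model; reject unknown names early.
        if model_name not in self.models:
            raise KeyError(f"unknown model: {model_name}")
        self.routes[task] = model_name

    def run(self, task: str, prompt: str) -> str:
        # Look up the model currently routed to this task and call it.
        return self.models[self.routes[task]](prompt)


# Stand-in models (assumptions). A real setup would wrap each
# provider's client behind the same prompt-in, text-out signature.
def stub_model_a(prompt: str) -> str:
    return f"[model-a] {prompt}"


def stub_model_b(prompt: str) -> str:
    return f"[model-b] {prompt}"


router = ModelRouter()
router.register("model-a", stub_model_a)
router.register("model-b", stub_model_b)
router.route("summarize", "model-a")

before = router.run("summarize", "Q3 report")
router.route("summarize", "model-b")  # swap the model; the workflow call is unchanged
after = router.run("summarize", "Q3 report")
```

The design choice worth noticing is that the swap is a one-line change to the routing table, while every call to `router.run("summarize", ...)` stays exactly as it was.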

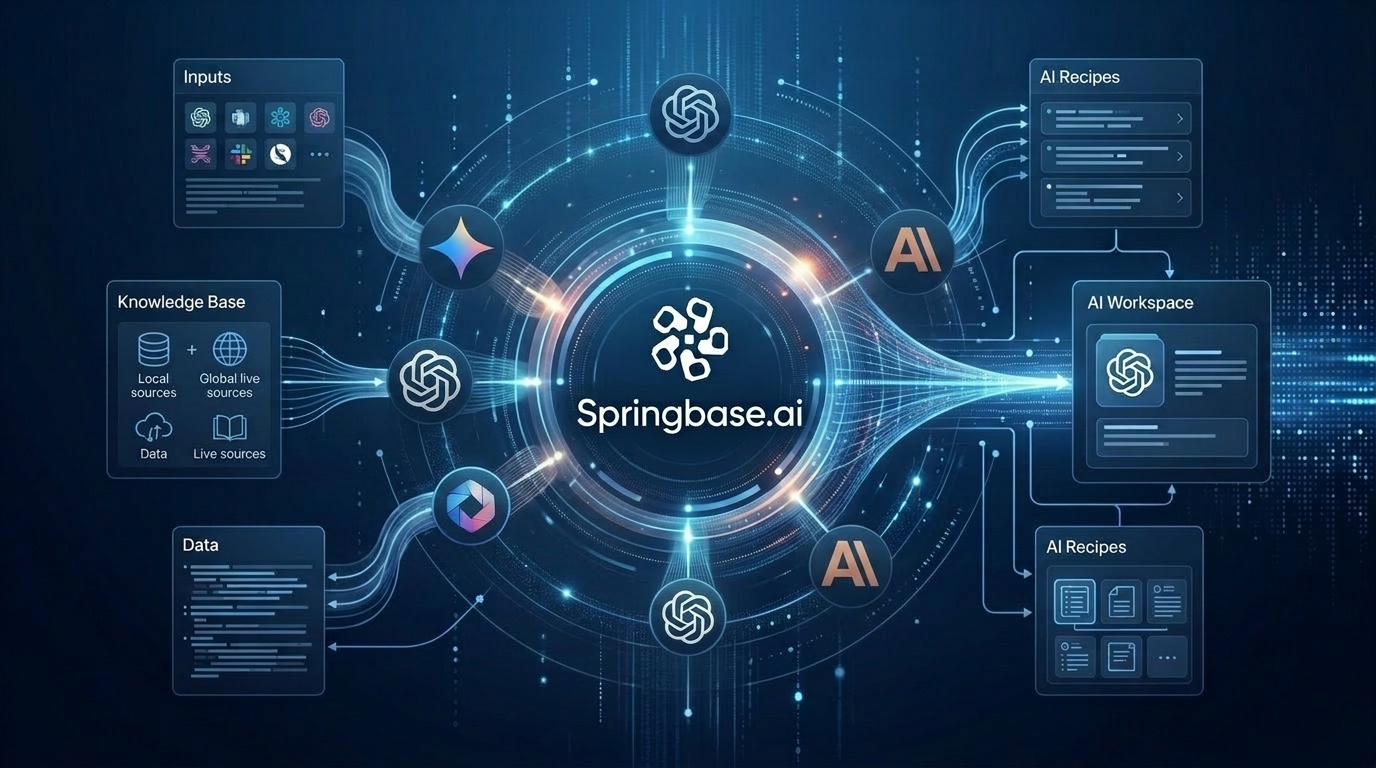

Where Springbase Fits in a Multi-Model World

This is where Springbase connects directly to the story.

The smartest AI setup is not one model. It is one workspace that gives you access to every model.

Springbase is built for a world where the frontier is crowded and constantly moving. Instead of locking teams into a single model, it gives teams access to the best models across providers, including the frontier models from OpenAI, Google, Anthropic, and xAI, in one unified workspace.

When Muse Spark becomes available through API access, teams using Springbase will be able to run it alongside other frontier models, compare outputs, and route tasks to whichever model is best suited for the job, without rebuilding their workflows from the ground up.

That multi-model flexibility matters because:

- No single model wins every task. The best model for deep reasoning may not be the best for fast summarization or structured document extraction.

- Rankings change. What is ranked fourth today may be ranked first in six months. Model-flexible teams can adapt instantly.

- Different tasks need different context. Springbase's Knowledge Bases and Live Contexts ground any model in the specific documents, policies, and live sources your team needs, regardless of which underlying model is doing the work.

- Workflows should outlast any single model. AI Recipes and repeatable workflows built in Springbase work across models, so a model change never means starting from scratch.
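As a rough illustration of comparing outputs across models, the sketch below sends one prompt to several models and picks a winner with a scoring function. The function names, the stub models, and the brevity-based score are all assumptions chosen to keep the example self-contained; they are not Springbase's API, and a real evaluation would use a task-specific quality measure.

```python
from typing import Callable, Dict, Tuple

# Hypothetical type: any callable that takes a prompt and returns text.
ModelFn = Callable[[str], str]


def compare_models(models: Dict[str, ModelFn], prompt: str) -> Dict[str, str]:
    """Send the same prompt to every model and collect outputs by model name."""
    return {name: fn(prompt) for name, fn in models.items()}


def pick_best(outputs: Dict[str, str], score: Callable[[str], float]) -> Tuple[str, str]:
    """Return the (model name, output) pair with the highest score."""
    best = max(outputs, key=lambda name: score(outputs[name]))
    return best, outputs[best]


# Stand-in models (assumptions); real ones would call a provider.
def terse_model(prompt: str) -> str:
    return "Refunds: 30 days."


def verbose_model(prompt: str) -> str:
    return "Our policy allows refunds within 30 days of purchase."


def brevity_score(text: str) -> float:
    # Toy metric for a summarization task: shorter output scores higher.
    return -len(text)


outputs = compare_models(
    {"terse": terse_model, "verbose": verbose_model},
    "Summarize the refund policy",
)
winner, answer = pick_best(outputs, brevity_score)
```

Because the score is passed in as a function, the same comparison can rank models differently per task, which is the practical meaning of "no single model wins every task."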

The launch of Muse Spark does not make your existing AI workflows obsolete. But it does reinforce why building around a single provider is an increasingly fragile strategy.

Final Thoughts

Meta's Muse Spark launch is one of the most significant AI announcements of 2026, not just because of the model itself, but because of what it signals about where the industry is heading.

A closed frontier model from Meta. A personal superintelligence framing. A five-way race at the frontier level. A distribution moat that no other lab can replicate.

The message is clear: AI is entering a new phase. The frontier is more competitive, more capable, and more important to everyday workflows than it has ever been.

The teams that win in this environment will not be the ones that pick the right model today and stick with it. They will be the ones that build workflows flexible enough to take advantage of the best model at any point in time.

That is the kind of AI infrastructure worth building toward. Start building model-flexible AI workflows at Springbase.
